A finance executive at a global firm joins a video call. He sees his CFO on screen. Hears his voice. Gets instructions to wire $25 million to a vendor account. He does it. The CFO was never on that call. It was a deepfake, and by the time anyone noticed, the money was gone.

That’s not a hypothetical. That’s what happened to Arup, the British engineering firm, in Hong Kong. And it’s the clearest sign yet that we’re not dealing with the same old cybercrime anymore.

So what exactly changed in 2026?

Everything, basically. The attacks got smarter. Faster. More convincing. And your old defenses? A lot of them are quietly failing.

The Numbers Tell a Brutal Story

Let’s start with the data, because the scale of this is genuinely alarming.

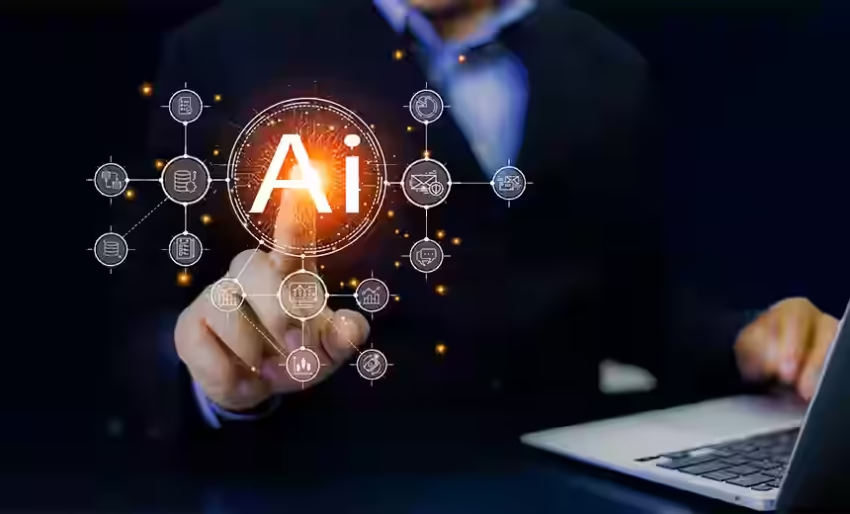

According to the IBM X-Force Threat Intelligence Index 2026, AI-powered cyberattacks rose 72% year-over-year. That’s not a blip. That’s a trend that’s been accelerating since criminals got access to powerful language models and started building tools on top of them.

The WEF Global Cybersecurity Outlook 2026 found that 87% of global organizations were hit by AI-enabled attacks in 2025. Think about that. If you’re running a business of any size, the odds that you’ve already been targeted are overwhelming.

Cybercrime is now projected to cost the world $10.5 trillion in 2026, according to industry estimates compiled by SentinelOne. And that number keeps climbing.

Here’s what makes the current wave particularly hard to fight:

- AI-generated phishing emails now arrive every 19 seconds (Cofense Threat Intelligence)

- Automated scanning attempts hit 36,000 per second globally (Fortinet Global Threat Report)

- AI-driven credential theft rose 160% in 2025, with over 14,000 breaches per month

- The average ransomware payment in 2025 hit $1.13 million

- 76% of detected malware now shows signs of AI-driven polymorphism, meaning it rewrites itself to dodge detection

And if you’re operating in India? SentinelOne’s statistics show Indian organizations face 3,195 cyberattacks per week in 2026, which is 62% above the global average. That’s not a footnote. That’s a crisis.

How AI Attacks Work Now?

This is where it gets technically interesting, and honestly, a little unsettling.

Polymorphic Malware

Traditional antivirus software works by recognizing the “signature” of a known threat. It’s like a bouncer checking faces against a list of known troublemakers. Polymorphic malware laughs at that system.

AI tools now let malicious code mutate constantly. Every time it replicates, it changes its code structure, making it unrecognizable to signature-based scanners. The IBM X-Force 2026 Index confirms that 76% of current malware exhibits this behavior. Named families like MalTerminal and LameHug are already in the wild, and they’re getting more sophisticated by the month.

AI Phishing

Remember when you could spot a phishing email because it said “Dear Valued Customer” or promised a Nigerian prince’s inheritance? Those days are gone.

Modern AI phishing tools generate hyper-personalized emails that reference your LinkedIn job history, your company’s recent press releases, and your name. They mimic your CEO’s writing style. They know your vendor relationships. The click-through rate for AI-crafted phishing emails is now 54% compared to just 12% for human-written ones, per analysis compiled by AllAboutAI. That’s not a small gap. That’s a completely different threat category.

And WormGPT, a dark LLM (large language model) sold on criminal forums, makes this accessible to anyone willing to pay for it. No coding skills required.

Deepfake Fraud

The Arup case was the landmark, but it’s far from isolated. 85% of organizations report being targeted by deepfake-related attacks in the past year, according to a DeepStrike industry survey. Voice cloning now requires just a few seconds of audio. Video deepfakes are convincing enough to fool trained professionals on a live call.

This has given rise to what’s now called BEC 3.0 (Business Email Compromise, third generation): fake audio and video layered on top of spoofed emails, targeting financial approvals, credential resets, and executive impersonations. The FBI’s Internet Crime Complaint Center reported a 37% rise in AI-assisted BEC attacks, contributing to a staggering $16.6 billion in cybercrime losses in 2024 alone.

Agentic AI Attacks

This one’s newer, and it scares security researchers more than almost anything else right now.

Agentic AI systems are autonomous programs that can be given a goal and then figure out how to achieve it without human guidance. In the wrong hands, that means an AI agent that independently conducts reconnaissance on a target network, maps its vulnerabilities, moves laterally through systems, and exfiltrates data, all without a human attacker ever touching the keyboard.

Barracuda Networks’ 2026 research highlights agentic AI as an emerging frontier threat. The OWASP Top 10 for Agentic Applications 2026 now lists prompt injection as a primary attack vector, where malicious instructions hidden inside content that AI tools read can hijack the agent’s behavior entirely.

Real Attacks, Real Losses

The numbers are one thing. The actual incidents hit differently.

The Arup Deepfake Heist ($25.6M): Already described above, but worth reinforcing. A deepfake video call convinced a finance worker to authorize a transfer. No malware involved. No hacking. Just a convincing fake human on a screen.

North Korean IT Worker Schemes: North Korean operatives, now reportedly using AI tools to fake identities and technical credentials, have been infiltrating tech companies as remote contractors. Once inside, they gather intelligence or plant backdoors. The FBI has issued multiple warnings about this tactic in 2025 and 2026.

PromptLock and PromptSteal: These AI-specific malware families target organizations that have deployed AI assistants internally. By injecting hidden instructions into documents the AI reads, attackers can redirect the AI’s behavior, steal data it has access to, or use it as a pivot point into deeper systems. It’s a new attack surface that most security teams haven’t even started thinking about.

GoldFactory: A mobile banking malware group that uses AI-generated interfaces to create fake bank login screens so convincing that even security researchers have had trouble distinguishing them from the real thing.

Why Your Current Defenses Are Probably Failing?

Here’s the hard conversation. A lot of what you’re probably relying on right now was built for a different era.

Signature-Based Detection Can’t Keep Up

As we established, polymorphic malware rewrites itself constantly. Signature scanning compares code to a list of known bad patterns. When the code is new every time, that list is useless. This isn’t a flaw in the tools. It’s a fundamental architectural problem that AI-powered malware was specifically designed to exploit.

MFA Isn’t the Safety Net It Used to Be

Multi-factor authentication is still important. But standard push-notification MFA is now routinely bypassed through session hijacking and adversary-in-the-middle attacks. Attackers intercept the authentication token after you’ve approved it, effectively stealing your logged-in session without ever needing your password or your one-time code.

Shadow AI Is an Open Door

Here’s a risk most companies aren’t tracking: their own employees. When workers use unapproved AI tools, such as third-party chatbots, browser-based AI writing assistants, or AI-powered code generators, they often paste sensitive internal data into those tools without realizing where that data goes. IBM’s research shows 13% of companies in 2025 experienced incidents linked to shadow AI. And 97% of organizations hit by AI-related incidents lacked proper AI access controls, according to IBM Think.

Shadow AI isn’t an IT problem. It’s a boardroom problem, and most boards don’t know it’s happening.

Identity Is the New Perimeter

The IBM X-Force 2026 Index flagged something that security professionals have been quietly noting for years: attackers don’t break in anymore. They log in. With credential theft rising 160%, phishing becoming undetectable, and session hijacking bypassing MFA, identity has become the primary attack vector. Traditional perimeter defenses like firewalls assume you’ve already kept the bad actor outside. That assumption is now dangerously outdated.

How to Defend Your Organization in 2026?

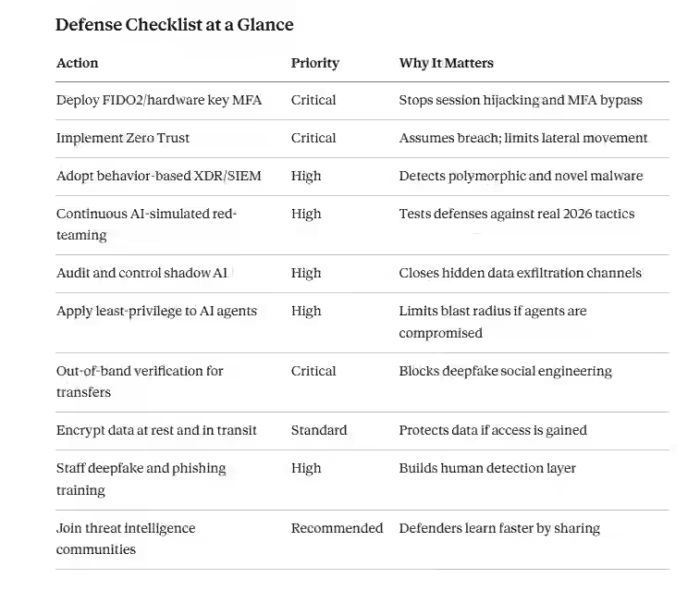

This isn’t a list of generic advice. These are the specific actions security experts are recommending right now, with clear reasons behind each one.

Step 1: Upgrade to Phishing-Resistant MFA

Ditch SMS-based and push-notification MFA for anything sensitive. Move to FIDO2 hardware security keys (like YubiKeys) or passkey-based authentication. These are cryptographically bound to the actual login device and can’t be intercepted mid-session. This one change closes one of the most exploited entry points in 2026.

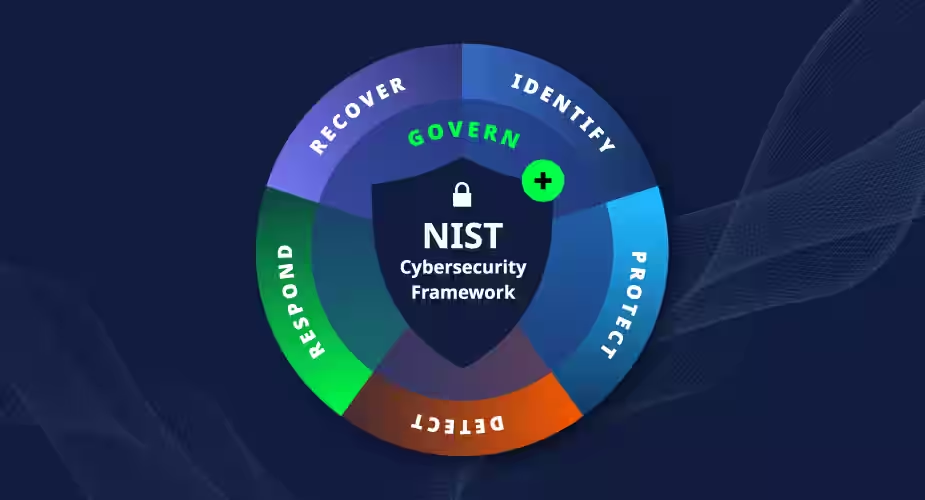

Step 2: Adopt Zero Trust Architecture

Zero Trust means you verify every user, every session, every device, with no exceptions, even for people already inside your network. The old model trusted anything inside the firewall. Zero Trust assumes breach and acts accordingly. This isn’t just a tech upgrade; it’s a philosophy shift. Start with identity verification and work outward.

Step 3: Switch to Behavior-Based Threat Detection

Move from signature-based antivirus to behavior-based detection systems. Modern XDR (Extended Detection and Response) and SIEM (Security Information and Event Management) platforms watch for what a process is doing, not just what it looks like. An AI agent quietly mapping your network structure looks suspicious in behavioral terms, even if its code is brand new. SentinelOne and similar platforms have built this capability specifically for the AI-threat era.

Step 4: Run Continuous Red-Team Exercises

Annual penetration testing is not enough anymore. Attackers are iterating daily. Your defenses should be tested just as frequently. Schedule continuous red-teaming that specifically simulates AI-driven attack patterns: automated scanning, AI phishing, deepfake social engineering, and agentic lateral movement. If your red team isn’t using AI tools to attack you, they’re not testing against the real threat.

Step 5: Lock Down Shadow AI

Start with a full audit: what AI tools are your employees actually using? Most organizations are genuinely surprised by the answer. Build a clear policy, create an approved list with privacy-safe tools, and make it easy for employees to do the right thing. Banning AI outright doesn’t work in 2026. But letting it run unchecked is a serious liability.

Step 6: Apply Least-Privilege to AI Agents

If your organization uses AI agents internally (and many are starting to), treat them exactly like external users. They should have access only to what they specifically need, and nothing more. An AI agent that can read your entire email archive, company financials, and HR records is a massive liability if compromised. OWASP’s 2026 guidance on agentic applications makes this point explicitly: AI agents need strict permission boundaries.

Step 7: Establish Out-of-Band Verification for High-Value Requests

Any request involving money movement, credential changes, or access escalation should require a second verification channel. If you get an email from your CEO asking to wire funds, call them back on a number you already have on file. Not one was provided in the email. This simple process killed the Arup attack vector before it was used. Build it into your workflow as a non-negotiable step.

Step 8: Train Staff on Deepfake Recognition and New Social Engineering

Your people are your most important defense layer, and also your most targeted one. Run training that specifically covers deepfake video and audio, AI-crafted phishing that looks nothing like the old stuff, and the new BEC playbook. Teach the out-of-band verification habit. Make “call and confirm” a cultural norm, not a paranoid exception.

Here’s How You Stay in It?

The WEF’s 2026 Outlook frames this well: we’re in an AI security arms race. Attackers use AI to attack faster, at scale, with more convincing tactics. Defenders use AI to detect, respond, and adapt faster than any human team could manage alone. Neither side is standing still.

I think the key mindset shift for 2026 is this: stop thinking about cybersecurity as a product you buy and start thinking about it as a practice you maintain. The UK’s National Cyber Security Centre (NCSC) actually takes a more measured view than some of the alarmist coverage, noting that fully autonomous AI attacks at scale may still be limited before 2027. But the trajectory is clear. The speed of iteration on the attacker side is accelerating.

Michael Freeman, Head of Threat Intelligence at Armis, has pointed out that agentic AI is changing the cost-benefit calculation for cybercriminals. Attacks that previously required a team of skilled operators can now be launched by one person with access to the right tools. That shift alone explains the 72% year-over-year rise we’re seeing.

Steve Stone, SVP at SentinelOne, has made a similar observation about the defender side: AI-powered detection is now finding threats that human analysts would miss, simply because the volume and speed exceed human capacity to process it.

The $10.5 trillion figure is staggering, but it’s also a measure of the stakes. For every dollar attackers make, someone else is losing it. The organizations that survive this era will be the ones that take defense as seriously as the attackers take offense.

We’re all in this now. The question is whether your organization is treating it that way.

FAQs:

Criminals use AI to write convincing phishing emails, clone voices, create deepfake videos, build self-mutating malware, and launch autonomous attacks that require little to no human involvement.

Polymorphic malware rewrites its own code constantly, making it invisible to traditional antivirus tools. Since 76% of detected malware now behaves this way, signature-based defenses are largely ineffective against it.

AI phishing is hyper-personalized, grammatically perfect, and mimics real people and contexts. It achieves a 54% click-through rate, compared with 12% for human-written attacks, making it dramatically harder to spot.

Deepfake attacks use AI to clone voices or fabricate videos of real people. Attackers impersonate executives to authorize transfers or access changes, as seen in the $25.6M Arup heist.

Agentic AI operates autonomously toward a goal without human input. In attacks, it independently scouts networks, exploits vulnerabilities, and steals data, making it faster and harder to detect than human-led intrusions.

Yes. Zero Trust verifies every user and device continuously, limiting how far attackers move after gaining access. Since AI attacks increasingly exploit stolen identities, removing automatic trust directly counters that strategy.

- Cloud Cost Management in 2026: FinOps Strategies That Work - April 5, 2026

- Top 10 Fintech Trends Dominating April 2026 - April 4, 2026

- Ultimate Guide to Fintech Tools for Businesses 2026 - April 3, 2026