Last updated 2 days ago

The global edge computing market is sitting somewhere between $82 billion and $258 billion right now, depending on how broadly you measure it. What every analyst agrees on is the direction: up, fast, and not slowing. With 5G rollouts maturing, AI inference moving closer to the data source, and data sovereignty laws tightening across the EU, India, and beyond, edge platforms have gone from “interesting option” to “boardroom-level decision.”

So which platform is actually right for you? That depends heavily on who you are. A DevOps engineer running Kubernetes workloads has different needs than a manufacturing OT engineer worried about offline uptime. This post walks through the 10 most relevant edge computing platforms in 2026 with that specificity in mind, including what each one does well, where it falls short, and the use case it was genuinely built for.

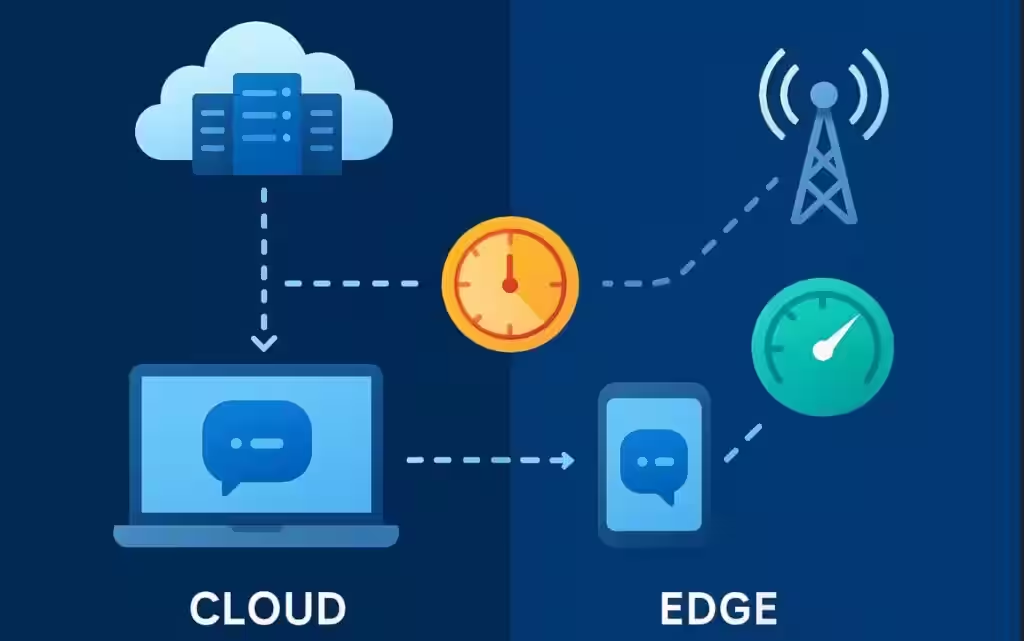

What Does Edge Computing Mean in 2026?

Edge computing means processing data close to where it’s generated, not shipping everything off to a centralized cloud. The result? Latency drops by up to 90% compared to cloud-only architectures, and systems can keep functioning even when connectivity is spotty.

What’s changed in 2026 is the why behind adoption. It’s no longer just latency. It’s AI inference running locally on GPU-enabled devices. It’s regulators in Europe and Asia requiring that sensitive data never crosses certain borders. It’s the rise of TinyML, which lets compact machine learning models run on small edge devices with minimal power. The edge, in short, has grown up.

How Were These 10 Platforms Selected?

Platforms were evaluated based on market adoption, enterprise readiness, 2025-2026 product developments, AI edge capability, and real-world use-case coverage. Vendor marketing copy was set aside in favor of analyst coverage, peer reviews on Gartner, and observable deployment patterns. The goal is an honest, useful comparison, not a repackaged press release.

The 10 Edge Computing Platforms

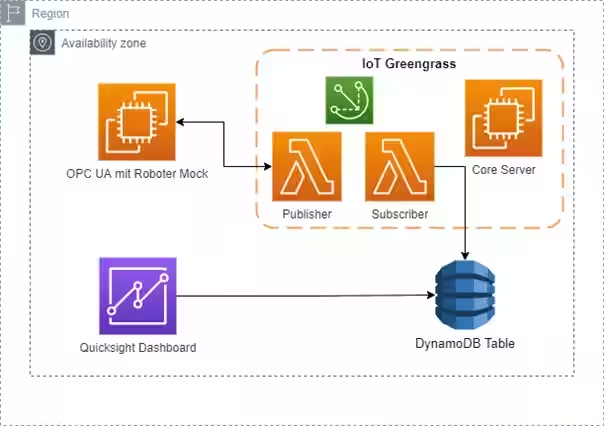

1. AWS IoT Greengrass + AWS Outposts

If your infrastructure already lives on AWS, this combination is probably the path of least resistance. And sometimes that’s the right call.

AWS Outposts brings EC2, ECS, EKS, RDS, and EMR directly on-premises. You get the full AWS experience at the edge, not a stripped-down version of it. Greengrass sits on top as the IoT-specific runtime, running Lambda functions and container-based components locally while staying in sync with the cloud. It keeps working even when connectivity drops, which matters enormously for factory floors and retail environments.

Outposts holds around 10% mindshare in hybrid cloud platforms as of early 2026. That’s real market penetration, not just marketing noise.

The catch? Two, actually. First, the cost. Outposts hardware isn’t cheap, and you’re paying AWS fees on top of that. Second, lock-in is genuine. Everything here is tightly coupled to AWS. If your organization ever shifts cloud strategy, migrating off this stack will be painful.

Bottom line: Best choice if you’re already deeply on AWS and need cloud-service parity at the edge. Don’t choose it just because it’s familiar, though.

2. Microsoft Azure Stack Edge + Azure Arc

Azure’s edge story is really two products doing two different jobs, and the combination is genuinely compelling for Microsoft-native enterprises.

Azure Stack Edge is a 1U hardware appliance with built-in ML acceleration. It handles the physical edge layer. Azure Arc then extends management across on-prem, edge, AWS, and GCP from a single control plane. That cross-cloud governance capability is one of the more practical differentiators in the market right now.

Multiple analysts rated Azure Arc the strongest hybrid cloud solution going into 2026, and it holds a 16.3% mindshare in hybrid cloud platforms. Regulated industries in particular, think healthcare and financial services, are drawn to it because Azure’s compliance certifications carry over to the edge stack.

A real limitation worth knowing: Azure Stack Edge requires a minimum of four nodes to get started. That rules it out for smaller deployments. And if you’re not already in the Microsoft ecosystem, the learning curve is steep.

Bottom line: If you’re running Windows workloads or operating in a regulated industry with existing Azure investment, this is a natural fit. For everyone else, it’s probably overkill.

3. Google Distributed Cloud Edge

Google isn’t number one in the cloud. But its edge strategy is smarter than the overall market position suggests.

Google Distributed Cloud (GDC) runs GKE (Google Kubernetes Engine) clusters on-premises and at telco network sites. It’s purpose-built for organizations that need sovereign-grade compute, meaning data stays strictly within defined geographic or regulatory boundaries. With data localization laws tightening globally, that’s a meaningful feature, not just a checkbox.

The 5G and MEC angle is interesting too. Google has AT&T partnerships in place for multi-access edge computing, positioning GDC as a serious player for telcos building out network edge infrastructure.

Where it gets tricky: if you’re not in the Google ecosystem, integration is friction-heavy. And the AI-first workloads it’s optimized for require GPU-capable nodes that push the budget up quickly.

Bottom line: Strong pick for telcos, government agencies, and enterprises with serious AI inference needs and data sovereignty requirements. Less compelling outside that specific context.

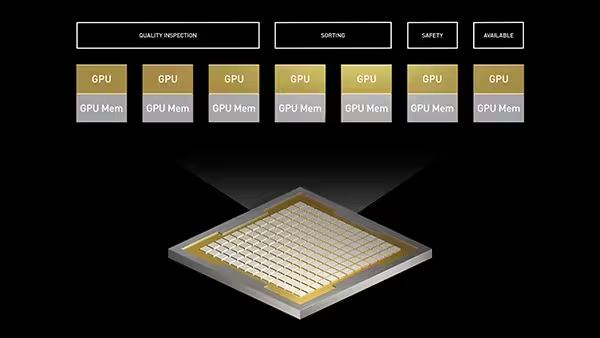

4. NVIDIA Fleet Command

Most edge platforms treat AI as a feature. Fleet Command treats it as the whole product.

It’s a browser-based control plane that manages AI applications across thousands of edge devices simultaneously. Zero-trust security architecture is built in from the start, not bolted on later. MIG (Multi-Instance GPU) support lets a single physical GPU serve multiple workloads in isolation, which is a practical cost reducer for large deployments.

Real-world users include KION Group (warehouse automation) and Aetina (AI edge hardware). The use cases cluster around computer vision, predictive maintenance, robotics, and increasingly, edge generative AI.

The limitation is the scope. Fleet Command doesn’t pretend to be a general-purpose edge platform. If your edge workloads don’t revolve around GPU inference, you’ll be paying for capability you never use.

Bottom line: For AI-first enterprises deploying at scale, this is the most purpose-fit tool available in 2026. For anything else, it’s not the right starting point.

5. Cloudflare Workers

Cloudflare Workers has earned the reputation of being the most mature edge compute platform for developers in 2026. That’s a bold claim, but the product catalog backs it up.

Workers run code globally across Cloudflare’s network with sub-millisecond response times. The expanded ecosystem now includes D1 (a relational database at the edge), Durable Objects for stateful computing, R2 object storage, and KV for fast key-value lookups. Oh, and a partnership with NVIDIA means AI inference at the edge is now on the table too.

What makes Workers genuinely different is what you don’t have to manage. No servers. No infrastructure decisions. No PhD in distributed systems required. You write JavaScript (or TypeScript, or Rust), deploy globally, and pay per request. For SaaS companies and API-heavy applications, that model is remarkably clean.

It’s not AWS Outposts. It’s not competing with data center hardware. But if you’re building global-facing web applications and need logic executing close to your users, Workers is the default answer in 2026.

Bottom line: Best serverless edge platform for developers who want global low latency without operational complexity.

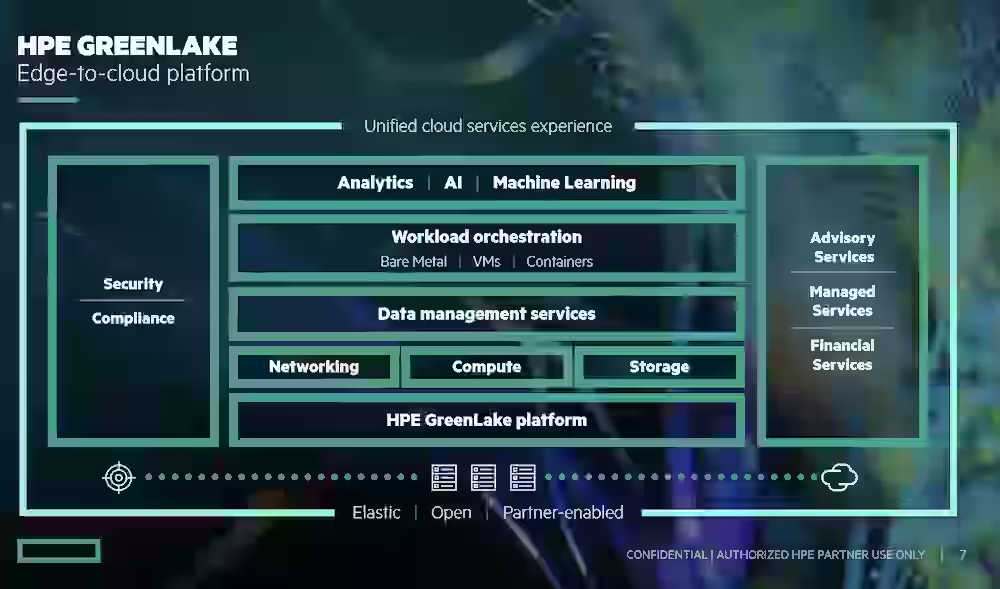

6. HPE GreenLake Edge

Here’s a scenario that plays out constantly in enterprise procurement: the IT director wants edge infrastructure, but the CFO doesn’t want another hardware asset on the balance sheet. HPE GreenLake was built precisely for that tension.

GreenLake delivers edge hardware as a consumption-based service. You pay for what you use, not a fixed hardware purchase. That model has significant traction in telecom, manufacturing, and healthcare, where budget cycles don’t always align with infrastructure needs.

The March 2026 news is worth calling out specifically. At NVIDIA GTC 2026, HPE announced the AI Grid solution, which connects AI factories and distributed inference clusters across edge sites. NVIDIA AI Enterprise is available directly on GreenLake now. HPE’s industrial edge business is forecast at 25% revenue growth, and the AI Grid announcement is a big reason why.

If there’s a downside, it’s that consumption pricing can get unpredictable at scale. The total cost over three to five years sometimes ends up higher than a direct hardware purchase.
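To make that trade-off concrete, here is a rough back-of-the-envelope sketch. Every number and function name in it is hypothetical (none of it comes from HPE pricing); it simply compares cumulative pay-per-use spend against an up-front purchase plus yearly support, and reports the first month where consumption becomes the more expensive path.

```python
def consumption_total(monthly_fee: float, months: int, annual_growth: float = 0.0) -> float:
    """Cumulative pay-per-use spend, with the monthly bill growing once per year."""
    total = 0.0
    for month in range(months):
        year = month // 12  # 0 for the first 12 months, 1 for the next 12, ...
        total += monthly_fee * (1 + annual_growth) ** year
    return total


def purchase_total(hardware_cost: float, annual_support: float, months: int) -> float:
    """Up-front hardware cost plus support, billed for every started year."""
    years_started = -(-months // 12)  # ceiling division
    return hardware_cost + annual_support * years_started


def breakeven_month(monthly_fee, hardware_cost, annual_support,
                    annual_growth=0.0, horizon=60):
    """First month where consumption spend exceeds the purchase path, else None."""
    for m in range(1, horizon + 1):
        pay_per_use = consumption_total(monthly_fee, m, annual_growth)
        buy = purchase_total(hardware_cost, annual_support, m)
        if pay_per_use > buy:
            return m
    return None


# Hypothetical inputs: $10k/month consumption vs. $300k hardware + $30k/year support.
flat = breakeven_month(10_000, 300_000, 30_000)
growing = breakeven_month(10_000, 300_000, 30_000, annual_growth=0.10)
print(flat, growing)
```

With these made-up inputs, flat usage crosses over in month 43, and 10% yearly usage growth pulls the crossover forward to month 36. Both land inside the three-to-five-year window mentioned above, which is why it is worth running the numbers with your own quotes before signing.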

Bottom line: Strong choice for large enterprises that need enterprise-grade edge hardware without ownership, especially if AI inference is part of the roadmap.

7. SUSE Edge

Some organizations just don’t want to hand their edge infrastructure to a hyperscaler. SUSE Edge (evolved from the Rancher acquisition) exists for exactly that reason.

It’s a full-stack Kubernetes platform built for constrained edge environments: a hardened distribution, lifecycle management, immutable infrastructure, and GitOps-based automation for OS, Kubernetes, and applications together. That last part matters for industrial environments where human-in-the-loop updates are too slow and too risky.

Telcos and industrial operators running heterogeneous hardware across dozens of sites find the GitOps model genuinely useful. It’s not just convenient; it’s operationally safer.
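If GitOps is new to you, the core idea is a reconciliation loop: a desired state lives in version control, and an agent at each site computes and applies whatever changes close the gap. The sketch below illustrates that idea only. It is not SUSE Edge's API, and the component names and versions are invented for the example.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Action:
    kind: str                 # "install", "upgrade", or "remove"
    component: str
    version: Optional[str]    # target version; None for removals


def plan(desired: Dict[str, str], actual: Dict[str, str]) -> List[Action]:
    """Diff the desired state (from Git) against what a site is actually running."""
    actions: List[Action] = []
    for name, version in sorted(desired.items()):
        if name not in actual:
            actions.append(Action("install", name, version))
        elif actual[name] != version:
            actions.append(Action("upgrade", name, version))
    # Anything running that Git does not declare gets removed.
    for name in sorted(actual.keys() - desired.keys()):
        actions.append(Action("remove", name, None))
    return actions


def reconcile(desired: Dict[str, str], actual: Dict[str, str]) -> Dict[str, str]:
    """Apply the plan to a copy of the site state and return the result."""
    state = dict(actual)
    for action in plan(desired, actual):
        if action.kind == "remove":
            del state[action.component]
        else:
            state[action.component] = action.version
    return state


# Hypothetical site: OS is behind, the app is missing, a legacy agent lingers.
desired = {"os": "6.0", "k8s": "1.30", "app": "2.1"}
site = {"os": "5.5", "k8s": "1.30", "legacy-agent": "0.9"}

for step in plan(desired, site):
    print(step)
site = reconcile(desired, site)
```

Because the plan is derived from a diff rather than typed by a person at each site, the same commit produces the same result everywhere, and running it a second time produces no actions at all.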

The honest trade-off: SUSE Edge requires real Kubernetes expertise in-house. It’s not a managed service. It’s priced for large organizations, and smaller teams without dedicated platform engineering will struggle to get value from it quickly.

Bottom line: The right call for enterprises and industrial operators who need open Kubernetes at the edge and want to avoid hyperscaler dependency.

8. AWS IoT Greengrass (Standalone)

Listing this separately from Outposts is intentional because the audience is genuinely different.

Greengrass standalone is for IoT device managers, not data center operators. You’re running Lambda functions and container components on factory sensors, retail kiosks, or connected devices. The key capability is offline operation: Greengrass keeps processing locally when cloud connectivity drops and syncs when it’s back.
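The offline behavior described here is often called store-and-forward. The following is a minimal sketch of the pattern in plain Python, not Greengrass code: the class name, the `temp_c` field, the 80-degree threshold, and the `uplink` callback are all assumptions made for illustration.

```python
from collections import deque
from typing import Callable, Deque, Dict, List

Reading = Dict[str, float]


class StoreAndForward:
    """Act on readings locally right away; upload them whenever the link allows."""

    def __init__(self, uplink: Callable[[List[Reading]], bool], max_buffered: int = 1000):
        self._uplink = uplink
        # Bounded buffer: once full, the oldest reading is dropped to make room.
        self._buffer: Deque[Reading] = deque(maxlen=max_buffered)

    def process(self, reading: Reading) -> bool:
        """Make the local decision immediately, then queue the reading for sync."""
        alert = reading["temp_c"] > 80.0  # hypothetical local rule
        self._buffer.append({**reading, "alert": float(alert)})
        return alert

    def sync(self) -> int:
        """Try to upload everything buffered; keep it all if the upload fails."""
        if not self._buffer:
            return 0
        batch = list(self._buffer)
        if self._uplink(batch):
            self._buffer.clear()
            return len(batch)
        return 0

    @property
    def pending(self) -> int:
        return len(self._buffer)


# Simulated cloud link that starts offline.
link = {"online": False}
received: List[Reading] = []


def fake_uplink(batch: List[Reading]) -> bool:
    if link["online"]:
        received.extend(batch)
    return link["online"]


node = StoreAndForward(fake_uplink)
node.process({"temp_c": 85.0})   # alert is raised locally even while offline
node.sync()                      # fails, readings stay buffered
link["online"] = True
node.sync()                      # succeeds, buffer drains
```

The design point is that the local decision never waits on the network. The bounded buffer is a deliberate trade-off for small devices: during a long outage you lose the oldest data rather than run out of memory.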

For manufacturing plants, smart retail environments, and logistics operators with connected fleets, this is one of the more battle-tested options available. It doesn’t need the full Outposts stack to be useful.

The constraint, as always with AWS, is that it ties your IoT architecture to AWS services. If that’s fine, Greengrass standalone is a solid, well-documented choice.

Bottom line: Best IoT edge runtime for teams already on AWS who need reliable local inference and cloud sync without a full data center deployment.

9. Akamai EdgeWorkers

Akamai came at edge computing from a different direction than everyone else on this list. It started with content delivery and extended into compute. EdgeWorkers lets you run JavaScript and custom logic across Akamai’s globally distributed network, executing functions at different stages of the HTTP request lifecycle.
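To picture what "different stages of the HTTP request lifecycle" means, here is a small conceptual model. It is written in Python purely for illustration (EdgeWorkers itself runs JavaScript), and the stage names, the `EdgePipeline` class, and the dictionary-based messages are hypothetical stand-ins rather than Akamai's actual API.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]
Hook = Callable[[Message], Message]


class EdgePipeline:
    """Runs user hooks at four points between the client and the origin."""

    STAGES = ("client_request", "origin_request", "origin_response", "client_response")

    def __init__(self, origin: Callable[[Message], Message]):
        self._origin = origin
        self._hooks: Dict[str, List[Hook]] = {stage: [] for stage in self.STAGES}

    def on(self, stage: str, hook: Hook) -> None:
        if stage not in self._hooks:
            raise ValueError(f"unknown stage: {stage}")
        self._hooks[stage].append(hook)

    def _run(self, stage: str, message: Message) -> Message:
        for hook in self._hooks[stage]:
            message = hook(message)
        return message

    def handle(self, request: Message) -> Message:
        message = self._run("client_request", dict(request))
        if "status" in message:
            # A hook answered at the edge, so the origin is never contacted.
            return self._run("client_response", message)
        message = self._run("origin_request", message)
        response = self._run("origin_response", self._origin(message))
        return self._run("client_response", response)


def origin(request: Message) -> Message:
    return {"status": "200", "body": "page for " + request["path"]}


def block_admin(message: Message) -> Message:
    if message["path"].startswith("/admin"):
        return {"status": "403", "body": "blocked at the edge"}
    return message


edge = EdgePipeline(origin)
edge.on("client_request", block_admin)
edge.on("client_response", lambda m: {**m, "cache-control": "max-age=60"})

print(edge.handle({"path": "/index.html"}))
print(edge.handle({"path": "/admin"}))
```

The useful property is that early stages can answer or reject a request without ever touching the origin, while later stages can rewrite what the origin sends back.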

For large websites, media companies, and e-commerce platforms, that’s a natural extension of a relationship they probably already have. You’re not adopting a new vendor; you’re expanding what your existing Akamai contract can do.

It’s not a general infrastructure play. It doesn’t do IoT. It doesn’t manage industrial workloads. But for businesses where web performance is the primary concern, EdgeWorkers fills a specific gap well.

Bottom line: Strong option for web-scale businesses optimizing HTTP performance, especially those already using Akamai for CDN.

10. ZEDEDA

Industrial edge infrastructure is rarely clean. You’ve got hardware from five different vendors, some of it five years old, running in environments where a software update can’t interrupt production. ZEDEDA was built for that reality.

It sits on top of heterogeneous hardware stacks and provides a single orchestration layer with enterprise-grade, zero-trust security from day one, not as an add-on. It’s appeared on multiple 2026 edge watchlists specifically for its open architecture and mature security model.

The hyperscalers can’t easily solve this problem because their platforms generally prefer their own hardware and ecosystems. ZEDEDA doesn’t care what hardware you’re running. That’s the differentiator.

Bottom line: The industrial operator’s choice when the hardware fleet is messy, mixed, and security-critical.

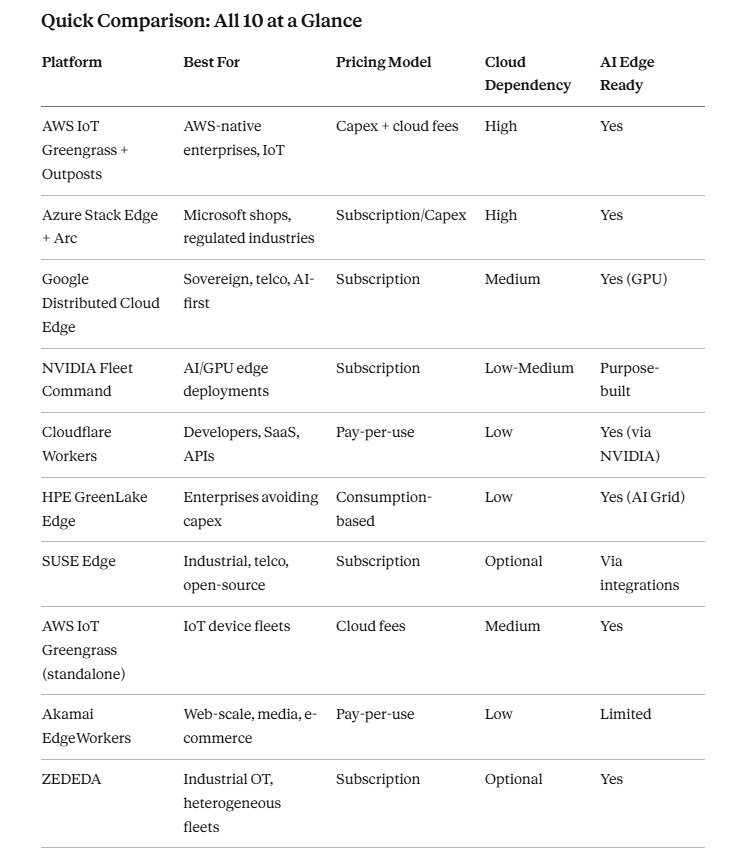

How to Choose an Edge Computing Platform

Start with your use case, not the vendor. Ask these questions in order:

- Is this primarily an IoT problem, an AI inference problem, or a web performance problem?

- Do you have an existing cloud provider relationship that would influence integration?

- Does your team have Kubernetes expertise, or do you need a managed experience?

- Are there data sovereignty requirements that restrict where data can go?

- What’s the budget model: capex tolerable, or consumption preferred?

Most platform decisions come down to two or three finalists after those five filters. The comparison table at the top of this post is a good starting point.
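As a rough illustration of how those filters narrow the field, here is a sketch that applies three of the five questions (problem type, cloud relationship, Kubernetes expertise) to a handful of the platforms above. The attribute values are a simplified reading of this post's bottom lines, not a vendor-verified matrix, and the `Platform` and `shortlist` names are made up for the example.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence


@dataclass(frozen=True)
class Platform:
    name: str
    problem: str               # "iot", "ai", or "web"
    ecosystem: Optional[str]   # cloud it is tied to; None if vendor-neutral
    needs_k8s_team: bool


# Simplified labels based on the write-ups above (an assumption, not official data).
CATALOG = (
    Platform("AWS IoT Greengrass (Standalone)", "iot", "aws", False),
    Platform("SUSE Edge", "iot", None, True),
    Platform("ZEDEDA", "iot", None, False),
    Platform("NVIDIA Fleet Command", "ai", None, False),
    Platform("Google Distributed Cloud Edge", "ai", "google", False),
    Platform("Cloudflare Workers", "web", None, False),
    Platform("Akamai EdgeWorkers", "web", None, False),
)


def shortlist(problem: str, cloud: Optional[str] = None, has_k8s_team: bool = False,
              catalog: Sequence[Platform] = CATALOG) -> List[str]:
    """Apply the filters in order and return the names that survive."""
    finalists: List[str] = []
    for platform in catalog:
        if platform.problem != problem:
            continue
        # Ecosystem-tied platforms only make sense if you already use that cloud.
        if platform.ecosystem is not None and platform.ecosystem != cloud:
            continue
        if platform.needs_k8s_team and not has_k8s_team:
            continue
        finalists.append(platform.name)
    return finalists


print(shortlist("iot", cloud="aws", has_k8s_team=True))
print(shortlist("iot"))
print(shortlist("web"))
```

Treat the output as a conversation starter rather than a verdict. Sovereignty and budget model still need a human look, since they depend on contract and deployment details no lookup table captures.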

Trends Worth Watching This Year

Three things are reshaping edge in 2026 beyond what any single platform does:

AI inference at the perimeter is the big one. GPU-enabled edge nodes are becoming standard, and the conversation around where to run inference (cloud vs. edge) is shifting decisively toward hybrid approaches.

Data sovereignty enforcement is accelerating globally. The EU AI Act and India’s DPDP Act are just two examples of regulations pushing localized processing from optional to mandatory for certain data types.

Edge-as-a-service pricing models, like HPE GreenLake, are gaining traction because enterprises are getting more comfortable treating infrastructure as an operational rather than a capital expense.

- Top 10 Edge Computing Platforms and Solutions - March 29, 2026